How Amazon, Google, Broadcom, and Anthropic Are Rewriting the Rules of Frontier AI

Anthropic’s infrastructure deals with AWS, Google, and Broadcom reveal who will control the tools that shape how we work, and who gets left out.

The big news in AI last week was SpaceX and Anthropic’s new partnership, mostly because of how funny the memes of Sam Altman watching his biggest nemesis court his biggest rival were. But Anthropic was making far bigger partnership moves long before SpaceX entered the picture.

In April alone, Anthropic signed deals that would make most infrastructure companies blush. Over $100 billion committed to AWS across the next decade. Up to 5 gigawatts of compute capacity locked in with Amazon. A separate agreement with Google and Broadcom securing next-generation chip capacity starting in 2027. To put the revenue side in context: Anthropic’s annualized revenue hit $30 billion by April, up from $9 billion just four months earlier. These are not the numbers of a software company. They’re the numbers of a company building something closer to roads. Anthropic is building physical infrastructure now, the kind that powers cities and connects continents, and the deals it signs today will determine how work gets organized for years to come.

How AWS, Google, Broadcom, and NVIDIA Turned Compute Into a Competitive Weapon

For most of software history, the scarce resource was talent and code. Write better algorithms, ship faster, win. Frontier AI has inverted that. Today, the scarce resource is compute, specifically the ability to secure it at scale before your competitors do. Anthropic now runs Claude on AWS Trainium chips, Google TPUs, and NVIDIA GPUs simultaneously, with over 1 million Trainium 2 chips in use for training and inference. That diversified hardware strategy reflects how chip access has become as strategically important as the models themselves. When Anthropic’s CFO described the Google-Broadcom deal as ‘our most significant compute commitment to date,’ the word to focus on is ‘commitment.’ Chips, power contracts, and cloud agreements have become the new barriers to entry, and behind those barriers sit the systems that millions of people will increasingly rely on to think, decide, and act at work.


Why costs keep rising despite falling token prices

Per-token inference prices for frontier models have been falling by somewhere between 5x and 10x per year, according to MIT FutureTech researchers. Yet running frontier-level models keeps getting more expensive, with costs rising at an estimated 3x to 18x per year. The reason is that each step up in performance demands far more tokens than the last. If prices drop 10x and the next performance gain requires 100x more compute, the total bill still climbs 10x. Epoch AI’s analysis puts the growth rate of frontier training costs at roughly 2.4x per year since 2016. At that pace, a single training run crosses the billion-dollar mark within years. Dario Amodei suggested in 2024 that the threshold was already approaching. Anthropic’s current infrastructure commitments suggest he wasn’t wrong.
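The arithmetic above is easy to check for yourself. The sketch below is illustrative only: the function names are made up for this example, and the $100 million starting cost is a hypothetical placeholder, not a figure from any of the sources cited here. Only the 10x price drop, the 100x compute jump, and the 2.4x annual growth rate come from the paragraph above.

```python
import math


def net_cost_multiplier(price_drop: float, usage_growth: float) -> float:
    """How much the total bill changes when unit prices fall by
    `price_drop` while the amount of compute consumed grows by `usage_growth`."""
    return usage_growth / price_drop


def years_to_reach(start_cost: float, target_cost: float, growth_per_year: float) -> float:
    """Years of compounding at `growth_per_year` needed to go from
    `start_cost` to `target_cost`."""
    return math.log(target_cost / start_cost) / math.log(growth_per_year)


# Prices drop 10x, but the next performance gain needs 100x more compute.
print(net_cost_multiplier(price_drop=10, usage_growth=100))  # 10.0

# HYPOTHETICAL starting point: a $100M training run growing 2.4x per year.
years = years_to_reach(start_cost=100e6, target_cost=1e9, growth_per_year=2.4)
print(round(years, 1))  # 2.6
```

Under those assumptions, a 10x price cut still leaves the bill 10x higher, and a run that costs $100 million today would pass $1 billion in under three years. Change the starting cost and the timeline shifts, but the compounding does the same work either way.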

What this means for how we work

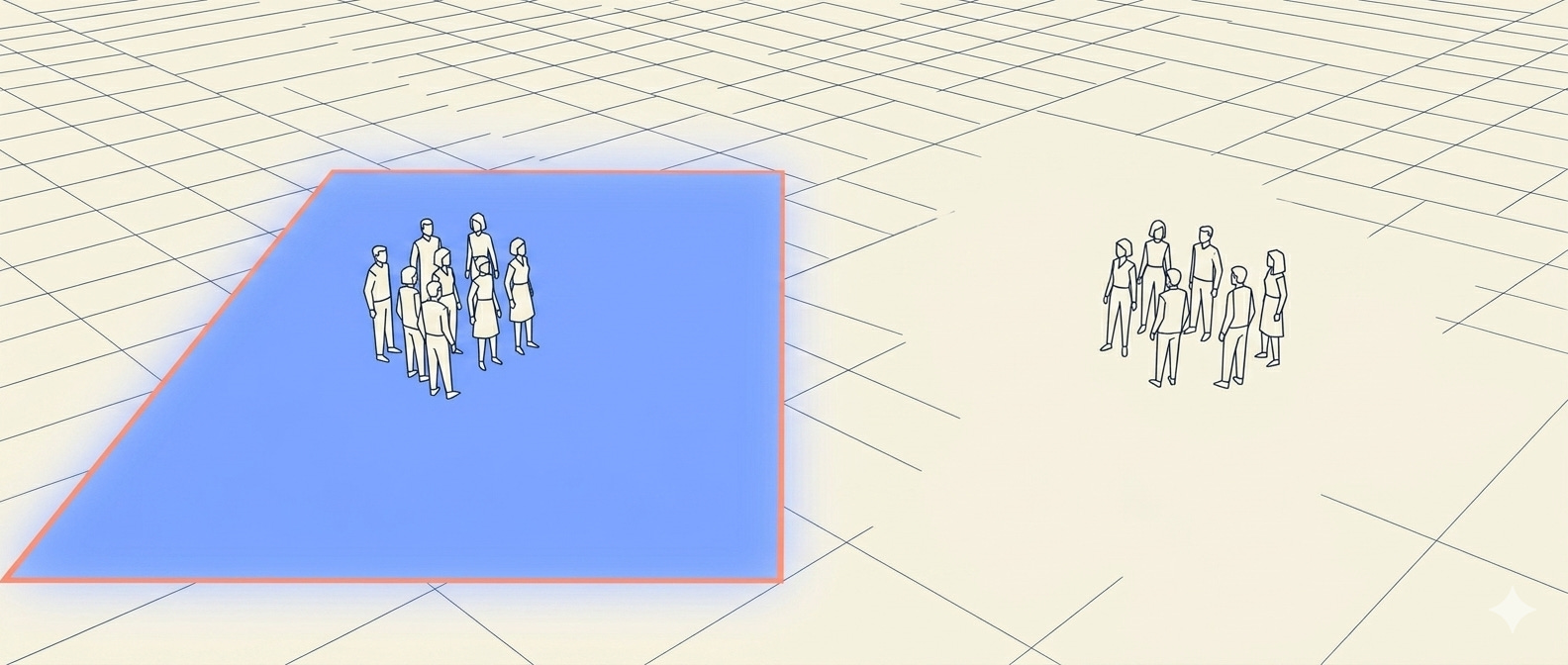

Frontier AI has entered capital-intensive territory reminiscent of cloud computing circa 2010, when the winners were determined not just by who wrote the best software but by who built the biggest data centers. For workers and organizations, the consequences reach well beyond pricing. The thinking layer of your work increasingly runs on infrastructure you don’t control and can’t see. Behavioral science calls the underlying habit cognitive offloading: we extend our minds outward onto tools we trust, and over time, we stop questioning the defaults those tools set for us. When those defaults are shaped by a handful of organizations with billion-dollar infrastructure bets, whoever controls the compute controls how work gets done.

When you build on a frontier model, you’re building on infrastructure that costs billions to sustain and is controlled by a narrowing set of players. For enterprises, the strategic reality is that access is concentrating fast. Claude is currently the only frontier model available across all three major cloud platforms: AWS, Google Cloud, and Azure. That breadth is a distribution advantage, but it also reflects how deeply compute partnerships now determine what products can exist and who can afford to use them. Workers who get access to frontier AI will think and decide differently from those who don’t, and that difference will be shaped less by individual skill or organizational intent than by the infrastructure deals being signed right now.

Frontier AI has become a cloud-and-chips business, with capital requirements that grow steeper every year, and the future of work will be organized, in no small part, around who has a seat at that table.


Most people haven't thought through what that actually means yet.